How to Fix Missing Smart Forms and Empty Catalog Pages in Oracle Fusion Cloud Procurement After an Environment Refresh

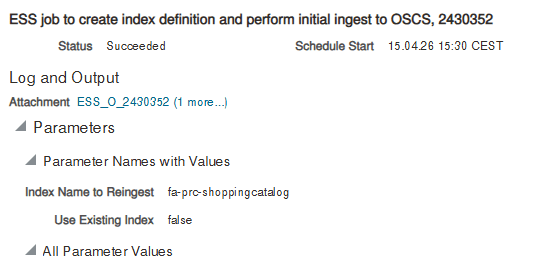

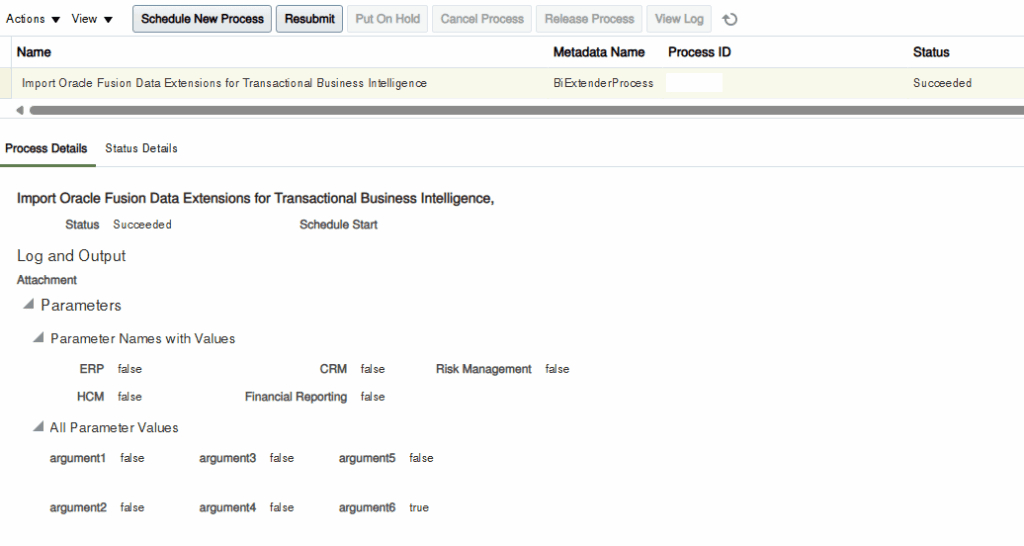

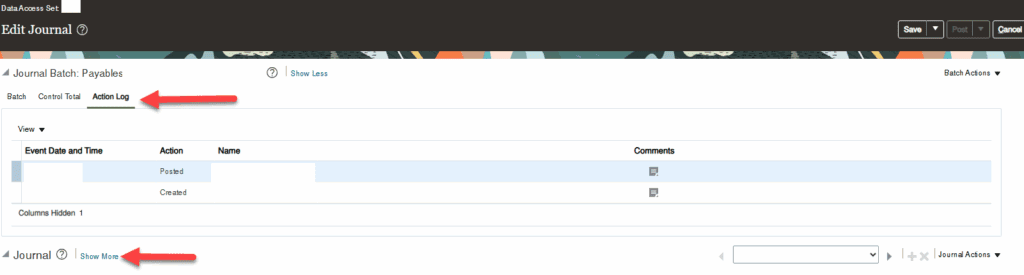

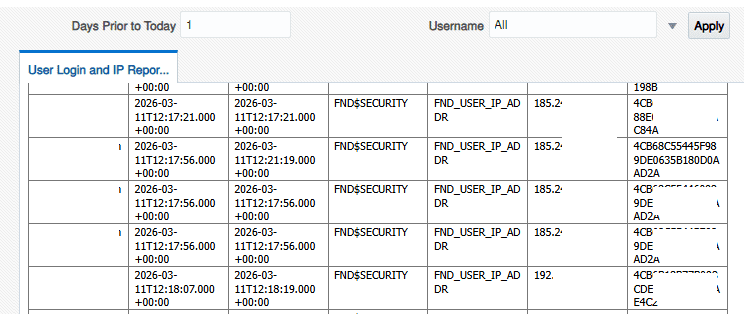

Any team running Oracle Fusion Cloud Procurement across multiple environments will eventually face the same post-refresh puzzle: a user for whom everything works in Production suddenly cannot find their Smart Form in the test environment, even though that environment was refreshed from Production only weeks ago. The symptoms are very specific: The user has all the […]